
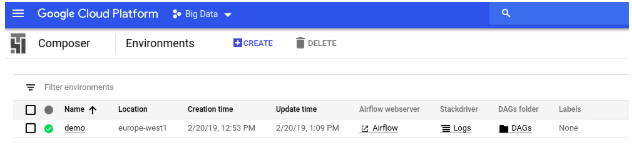

Apache Airflow certification

10/10/2023

Apache Airflow Core includes the webserver, scheduler, CLI, and other components that are needed for a minimal Airflow installation.

Providers packages include integrations with third-party projects. They are versioned and released independently of the Apache Airflow core. Examples include Internet Message Access Protocol (IMAP), Microsoft PowerShell Remoting Protocol (PSRP), and Microsoft Windows Remote Management (WinRM).

Airflow has an official Dockerfile and Docker image published in DockerHub as a convenience package. You can extend and customize the image according to your requirements.

Airflow has an official Helm Chart that will help you set up your own Airflow on a cloud or on-prem Kubernetes environment and leverage its scalable nature to support a large group of users. Thanks to Kubernetes, you are not tied to a specific cloud provider.

Airflow releases an official Python API client that can be used to interact with the Airflow REST API from Python code, and an official Go API client that does the same from Go code.

Apache Airflow is a popular tool for automating tasks and their workflows. It is also widely used for scheduling tasks. Suppose you are working as a data engineer or an analyst and need to repeat a task that takes the same effort and time on every run. Such tasks might consist of extracting, loading, or transforming data that feeds a regular analytical report. You can automate such tasks with Airflow, including jobs such as training a machine learning model, so that they run at a regular interval that you specify.

Additionally, Airflow makes it easier to automate time-consuming, repetitive tasks. Workflows are written primarily in Python, often alongside SQL, because these languages have strong integration and backend support, and Airflow offers a rich UI to identify, monitor, and debug issues that may arise over time or across environments. Thus, Apache Airflow is an efficient tool for handling such tasks.

Before proceeding with the installation and usage of Apache Airflow, let's first discuss some terms that are central to the tool.

What is DAG?

DAG stands for Directed Acyclic Graph. It is the heart of Apache Airflow. It can be defined as a series of tasks that you want to run as part of your workflow. These might include generic tasks like extracting data with SQL queries or doing some integrity calculation in Python and then fetching the result to be displayed as tables. In Airflow, these generic tasks are written as individual tasks in a DAG.

The main purpose of using Airflow is to define the relationships between tasks and their dependencies, for example loading data before actually processing it. It might also involve defining the order in which scripts run. A DAG is written primarily in Python, is saved with a .py extension, and is heavily used for orchestration with tool configuration. Also, to run a DAG you must specify the executable file so that the DAG can run automatically on a specified schedule. The schedule is defined by a cron expression, which can express intervals in terms of minutes, days, or weeks.

Thus, after learning about DAGs, it is time to install Apache Airflow so that it is ready to use when required.
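To make the DAG idea described above more concrete, here is a minimal sketch of how dependencies between tasks determine the order in which they run. It does not use Airflow's own API: it relies only on Python's standard `graphlib` module (Python 3.9 or later), and the task names and the `run_order` helper are hypothetical, chosen to mirror the extract, calculate, and display example from the text.

```python
from graphlib import CycleError, TopologicalSorter

# Hypothetical tasks: each key maps to the set of tasks it depends on.
dependencies = {
    "extract_with_sql": set(),
    "calculate_in_python": {"extract_with_sql"},
    "display_tables": {"calculate_in_python"},
}


def run_order(deps):
    """Return an order in which every task comes after its dependencies.

    Raises graphlib.CycleError if the graph is not acyclic.
    """
    return list(TopologicalSorter(deps).static_order())


order = run_order(dependencies)
print(order)

# A graph with a cycle is not a DAG, so no valid run order exists.
try:
    run_order({"a": {"b"}, "b": {"a"}})
except CycleError:
    print("cycle detected: not a valid DAG")
```

The "acyclic" part matters because a task that indirectly depends on itself could never start. In Airflow itself, the same relationships are declared between tasks inside a DAG file, and the scheduler works out when each task may run.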
